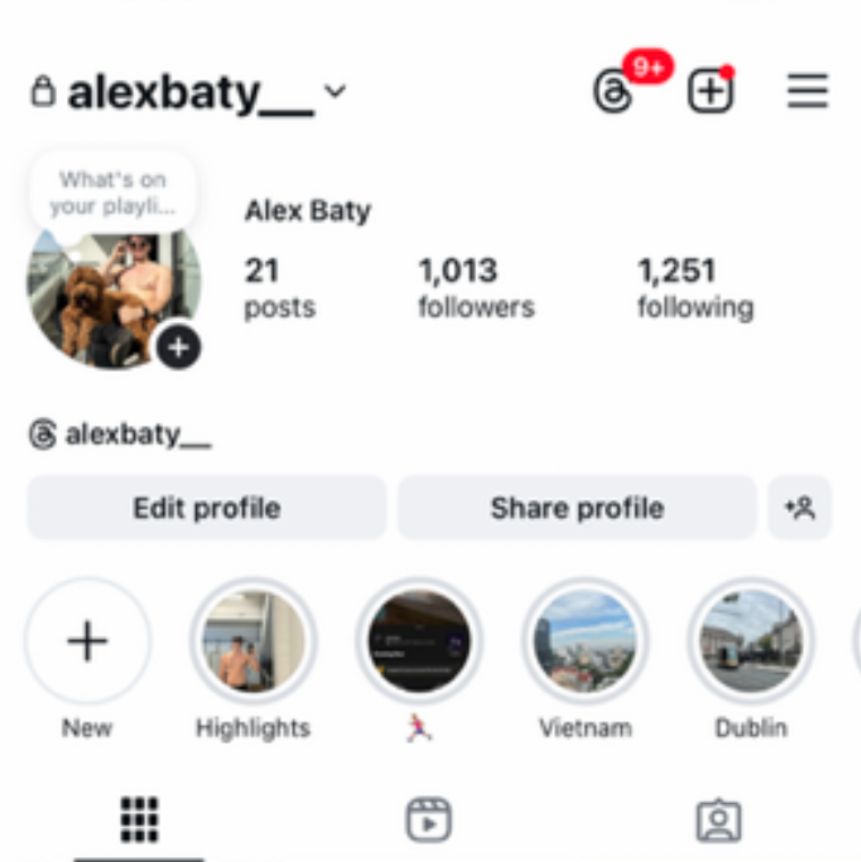

Meta had a very different idea about a simple, innocent moment. Alex Baty, a 26-year-old insurance broker from New Zealand living in Australia, posted a photo of himself shirtless on his balcony with his dog sitting in his lap. Nothing to see here, right? Just a guy enjoying some sun with his dog. But just hours after sharing this harmless pic, Baty found himself facing a ban from Instagram for allegedly violating Meta’s guidelines on child sexual exploitation, abuse, and nudity.

The Calm Before the Banstorm

In October, Baty switched his Instagram profile picture to that now-infamous balcony snap. Hours later, his account was banned for allegedly violating Instagram’s guidelines on child sexual exploitation, abuse, and nudity. The timing convinced Baty that the image with his dog was the trigger.

“The photo I was banned for is very tame. I’d show my Nana that image,” Baty told Stuff. But apparently, that wasn’t enough to save him from Meta’s overzealous moderation.

Appeals and Apologies: The Social Media Rollercoaster

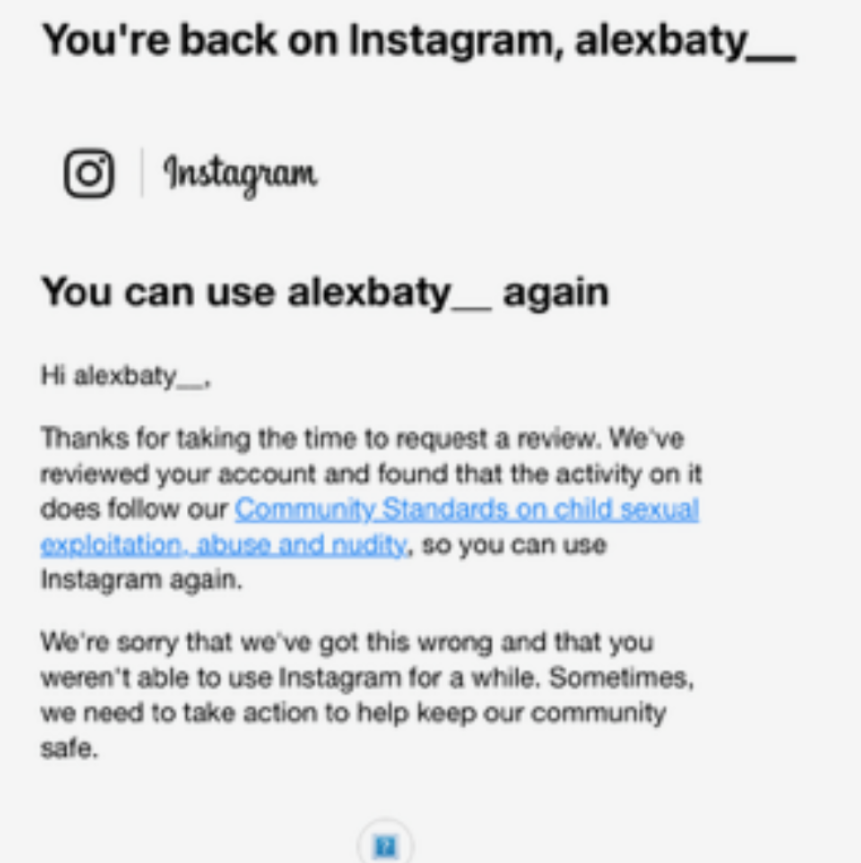

Naturally, Baty appealed the decision, and after a few weeks of radio silence, his account was reinstated with an apology from Meta. But the relief didn’t last long. A week later, his account was banned again—not just Instagram, but across all Meta platforms. At this point, even the most dedicated social media user would be wondering, “What did I even do?”

Baty, now exasperated, turned to every available channel to restore his account, including paying for Meta Verified. But instead of getting help, he was just told to wait for the appeal process. Sounds like a customer service horror story we all know too well.

Is Meta’s AI Out Here Ruining Vibes?

Here’s where things get wild: Baty suspects that Meta’s AI might have flagged his account after he deleted the photo. While Meta doesn’t give specifics on how its moderation works, we know that AI tools are responsible for scanning millions of photos every day. And sometimes, they get it wrong. Really wrong.

According to Otago University senior lecturer Michael Daubs, AI is supposed to flag “problematic” content like nudity, but it’s not foolproof. AI can mistake a harmless image for something far more scandalous, like an apple being mistaken for a nude body part (seriously, it happens). And let’s be honest, when you’re scanning that many photos, human intervention might come too late.

The Hypocrisy of Meta’s Moderation

What frustrates Baty the most? The sheer inconsistency in Meta’s moderation. “There are very explicit photos from all sorts of walks of life, you know, people who monetize off Instagram, like OnlyFans,” Baty said. “It’s just crazy. It’s just a little photo of me and my dog.” And he’s got a point. If you’ve ever scrolled through Instagram’s “Explore” page, you know there’s some wild stuff out there. But a shirtless pic with a dog? Apparently, that’s too much to handle for Meta’s AI.

In Conclusion: AI, Please Sit This One Out

So, what did we learn? Well, Meta’s moderation tools need a little more training (and a lot more consistency). And while Baty’s ban might’ve been the result of an algorithmic mistake, it’s clear that the appeal process isn’t doing much to help those who fall victim to it. Until Meta gets their AI situation sorted, maybe it’s time for us to rethink posting any shirtless dog pics. But hey, if you do—at least you know you’re not alone in this algorithmic madness.

Alex here (The guy who got banned)

The first ban also applied across all Meta apps, not just the second one.

The second ban appeal was denied within 10 minutes, which means it was a PERMANENT ban: I could never access Meta apps (80% of social media apps) ever again. I made new accounts on my work phone, and those got banned within an hour due to affiliation with the first account. Insane.